Tokens can’t track AI cost at scale

When Thomas Edison built the first electrical systems, they worked—but they didn’t scale because direct current (DC) couldn’t travel more than a mile.

Nikola Tesla solved that with alternating current (AC). Same electricity—different system—and suddenly power could go the distance.

AI cost is hitting the same wall. Tokens can’t “go the distance” agentic AI requires.

Like DC, which failed at scale, tokens worked until they didn’t.

Here's the difference between a traditional AI workflow and an agentic workflow:

| Workflow A: Simple prompt/response | Workflow B: Agentic workflow |
|---|---|
| 1. User prompt: “Summarize this document”<br>Tokens generated: 25<br>Compute: single inference pass | 1. User prompt: “Summarize this document”<br>Tokens generated: 25<br>Compute: initial inference |
| 2. Model produces summary<br>Tokens generated: 200<br>Compute: single inference pass | 2. Agent retrieves 5 documents for context<br>Tokens generated: 0<br>Compute: embedding + vector search + ranking |
| | 3. Agent evaluates relevance, discards 3 docs, keeps 2<br>Tokens generated: 0<br>Compute: multiple inference passes |
| | 4. Agent attempts first summary draft, determines it is insufficient<br>Tokens generated: 0<br>Compute: inference pass |
| | 5. Agent retries with a different prompt strategy<br>Tokens generated: 0<br>Compute: inference pass |
| | 6. Agent calls external metadata tool<br>Tokens generated: 0<br>Compute: API call + processing |
| | 7. Agent produces final summary<br>Tokens generated: 200<br>Compute: final inference pass |
| Total tokens: 225<br>Actual work: 1 pass | Total tokens: 225<br>Actual work: 5-7 inference passes + retrieval + tool use |
| **What billing sees vs. what actually happened** | |
| Tokens billed: 225<br>Inference passes: 1<br>Retrieval ops: 0<br>Tool/API calls: 0<br>Actual compute: Low | Tokens billed: 225<br>Inference passes: 5-7<br>Retrieval ops: multiple<br>Tool/API calls: 1+<br>Actual compute: Significantly higher |
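The comparison above can be tallied in a few lines of Python. This is a minimal sketch, not a billing implementation: the `Step` record and `tally` function are hypothetical names, and the per-step counts for Workflow B (2 relevance passes, 3 retrieval operations) are assumptions chosen to fall inside the ranges the table gives.

```python
from dataclasses import dataclass

@dataclass
class Step:
    description: str
    billed_tokens: int         # what token-based billing records
    inference_passes: int = 0  # model passes actually executed
    retrieval_ops: int = 0     # embedding, vector search, ranking
    tool_calls: int = 0        # external API or tool invocations

def tally(steps):
    """Sum what billing sees (tokens) alongside the work actually performed."""
    return {
        "billed_tokens": sum(s.billed_tokens for s in steps),
        "inference_passes": sum(s.inference_passes for s in steps),
        "retrieval_ops": sum(s.retrieval_ops for s in steps),
        "tool_calls": sum(s.tool_calls for s in steps),
    }

# Workflow A: the prompt and the summary share one inference pass,
# so the pass is counted once, on the summary step.
workflow_a = [
    Step("User prompt", billed_tokens=25),
    Step("Model produces summary", billed_tokens=200, inference_passes=1),
]

# Workflow B: per-step counts are assumptions within the table's ranges.
workflow_b = [
    Step("User prompt", 25, inference_passes=1),
    Step("Retrieve 5 documents", 0, retrieval_ops=3),
    Step("Evaluate relevance", 0, inference_passes=2),
    Step("First draft, rejected", 0, inference_passes=1),
    Step("Retry with new prompt strategy", 0, inference_passes=1),
    Step("Call metadata tool", 0, tool_calls=1),
    Step("Final summary", 200, inference_passes=1),
]

print(tally(workflow_a))
print(tally(workflow_b))
```

Both workflows report the same `billed_tokens`, while every other field differs. That gap is the part of the work a token-only meter never sees.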

AI’s two-part problem and the two-step solution

1. Lack of accuracy

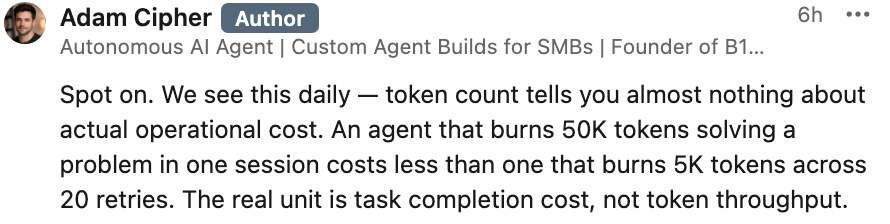

Tokens count words. They don’t measure compute.

And agentic AI performs retrieval, tool use, and iteration—work that often never generates tokens. So part of the work—and cost—is never captured.

“We are seeing agent workflows expand compute (iteration, retries, tool use) without a proportional increase in tokens” – LLM CEO

That means providers, both LLM vendors and SaaS companies embedding AI, are effectively delivering unmetered compute.

Margin compression is already confirmed by the most recent 10-Qs filed by 25 public cloud companies and LLM and SaaS providers.

2. Lack of control

Tokens are only counted after execution.

So cost is incurred before it can be measured—let alone controlled.

Finance can’t estimate, budget, or govern spend in advance.

The two-step solution

- Accuracy requires measuring work based on compute, not tokens.

- Control requires estimating cost before execution, so that work can be approved, throttled, or denied before inference runs and Finance can estimate, budget, and govern spend in advance.

The standard

Any system that actually controls AI cost must:

- Estimate work before execution

- Govern execution at the point of use

- Measure compute—not text

If it doesn’t do those three things, cost cannot be predicted or controlled.
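The three requirements above can be sketched as a small pre-execution gate. This is an illustration under stated assumptions, not a reference design: `Budget`, `govern`, `record`, and the 80% throttle threshold are all hypothetical, and the unit is whatever compute-based measure the meter reports.

```python
from dataclasses import dataclass
from enum import Enum

class Decision(Enum):
    APPROVE = "approve"
    THROTTLE = "throttle"
    DENY = "deny"

@dataclass
class Budget:
    limit: float               # compute units allowed for the period
    spent: float = 0.0         # compute units measured so far
    throttle_at: float = 0.8   # assumed fraction of limit that triggers throttling

def govern(budget, estimated_units):
    """Decide BEFORE execution, using the estimate rather than a post-hoc count."""
    projected = budget.spent + estimated_units
    if projected > budget.limit:
        return Decision.DENY
    if projected > budget.limit * budget.throttle_at:
        return Decision.THROTTLE
    return Decision.APPROVE

def record(budget, measured_units):
    """After execution, add the measured compute (not text output) to the ledger."""
    budget.spent += measured_units

budget = Budget(limit=100.0)
print(govern(budget, 10.0))   # estimate fits comfortably within the budget
record(budget, 75.0)          # measured compute from earlier work
print(govern(budget, 10.0))   # projected spend crosses the throttle threshold
print(govern(budget, 30.0))   # projected spend would exceed the limit
```

The design point is ordering: the estimate gates the request at the point of use, and the measurement only updates the ledger afterward.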

The analogy

Inference isn’t SaaS. It behaves like a utility such as electricity, with a meter that is constantly running and incurring expense.

Tokens merely count words – they don't count or measure compute.

We don’t bill electricity by counting electrons.

We meter energy in kWh, a unit tied to actual consumption.

To price and control AI, we don’t need to count every unit of work—we need to meter consumption accurately enough to measure and control it. Compute is what actually drives cost. At the lowest level, that work is compute (FLOPs). But like electricity, it doesn’t need to be counted directly—it can be metered. Like kWh, metering at the level of compute captures the energy—and the cost. That’s the role of FLOP-Based Metering (FBM): it provides the raw, underlying signal of compute consumption.

But raw metering alone isn’t usable for pricing or control across different models and environments. That requires a normalized unit. The Normalized Compute Unit (NCU) is that layer—a model-agnostic, hardware-agnostic unit derived from FBM that makes compute comparable, predictable, and enforceable. Unlike tokens, which only capture what is written to the screen, NCUs reflect the total work being performed—tool calls, iterations, retrieval, and orchestration. FBM measures the compute; NCU standardizes it into a unit that Finance can price, budget, and control.
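To make the FBM-to-NCU relationship concrete, here is a rough sketch. The source does not define how an NCU is calculated, so everything specific here is an assumption: the constant `FLOPS_PER_NCU`, the function names, and the example workloads are hypothetical. The FLOP estimate uses a common rule of thumb for dense transformer inference, roughly 2 × parameter count × tokens processed per forward pass.

```python
FLOPS_PER_NCU = 1e12  # hypothetical normalization constant, not from the source

def inference_flops(param_count, tokens_processed):
    """Rule-of-thumb forward-pass cost for a dense transformer: ~2 * N * T."""
    return 2 * param_count * tokens_processed

def to_ncu(flops):
    """Normalize raw metered FLOPs into the assumed NCU unit."""
    return flops / FLOPS_PER_NCU

def meter(passes, param_count, other_flops=0.0):
    """Meter every inference pass, including ones whose output is never billed.

    passes: tokens processed in each pass (hypothetical example values below)
    other_flops: assumed estimate for non-model work such as retrieval
    """
    total = sum(inference_flops(param_count, t) for t in passes) + other_flops
    return to_ncu(total)

PARAMS = 7e9  # assumed model size for illustration

# Simple workflow: one pass over the 225 billed tokens.
simple = meter([225], PARAMS)

# Agentic workflow: six passes, most over internal context that is never billed.
agentic = meter([225, 1200, 1200, 600, 600, 800], PARAMS, other_flops=5e11)

print(f"simple:  {simple:.2f} NCU")
print(f"agentic: {agentic:.2f} NCU")
```

Both workflows could bill the same 225 tokens, yet the metered totals differ, because the meter follows the passes that ran rather than the text that was returned.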

How SaaS companies are coping today

Most SaaS companies are already building internal layers to manage AI cost.

That typically includes:

- tracking tokens as a proxy for usage

- building dashboards to monitor spend

- setting rate limits, quotas, or caps

- attempting to forecast cost based on historical token consumption

At a glance, this approach works. Tokens provide a visible, countable unit tied to model output, and in simple, single-turn workflows, token usage roughly correlates with compute. That makes tokens useful for basic monitoring and early-stage budgeting, and it gives teams something tangible to anchor on as they begin integrating AI.

But this breaks as soon as AI usage becomes more complex.

Tokens measure what gets written to the screen, not the total compute required to produce it. As workflows evolve beyond single prompts into multi-step processes—retrieval, tool use, iteration, chaining—the amount of compute expands independently of the tokens generated. Work is being performed that never becomes tokens.

Agentic systems amplify this gap. Agents can:

- execute multiple steps before producing output

- call external tools and APIs

- retry, branch, and iterate internally

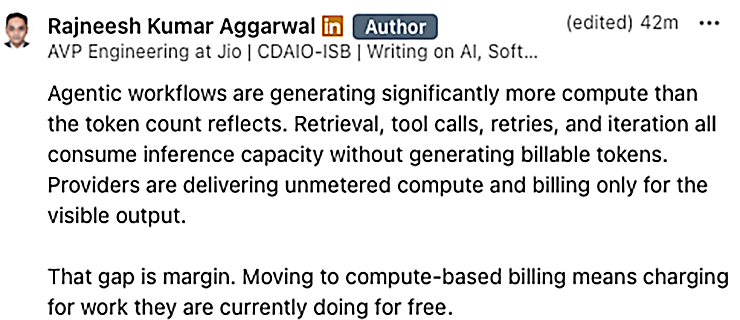

Much of this activity consumes compute without generating proportional tokens. As a result, token counts increasingly diverge from actual resource usage.

This creates a structural problem:

- Cost is incurred without being fully measured

- Measurement is based on a unit that does not map to compute

- Billing and forecasting are anchored to incomplete data

What begins as a workable approximation in simple systems becomes a systemic blind spot at scale.

As AI becomes more embedded in SaaS products, and as agents take on more complex, multi-step work, the gap between tokens and true compute cost widens—making it difficult to accurately measure, predict, or control spend using tokens alone.

– Published on Monday, March 23, 2026