Probabilistic vs. Deterministic: It’s not just an accuracy problem. It’s a cost problem.

By Alan Jacobson, AI Economics Strategist

Everyone is debating whether LLMs are accurate enough.

That’s the wrong question.

The deeper issue isn’t just correctness.

It’s predictability — of both output and cost.

Two different kinds of systems

At a high level, there are two ways software behaves.

1) Deterministic systems

- Same input → same output

- Fixed execution path

- Known number of steps

If you run it twice, you get the same result every time.

That means:

- you can test it

- you can audit it

- you can predict how much it will cost to run

2) Probabilistic systems

- Same input → potentially different outputs

- Execution path can vary

- Outcomes are generated, not computed

Run it twice, you may get two different answers.

That’s not a bug.

That’s how these systems are designed.

LLMs are probabilistic — by design

Large Language Models don’t “look up” answers.

They generate them by:

- predicting a probability for each possible next token

- sampling from those probabilities, which were learned from data

That means:

- outputs can vary

- reasoning paths can vary

- length and structure can vary
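
To make the sampling step concrete, here is a minimal sketch of how one next token might be drawn. The three-token vocabulary, the scores, and the function names are hypothetical, chosen only for illustration. Real models work over far larger vocabularies and add other decoding controls.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    """Draw one token at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical vocabulary and scores, for illustration only.
VOCAB = ["approved", "denied", "pending"]
LOGITS = [2.0, 1.5, 0.5]

if __name__ == "__main__":
    rng = random.Random()  # unseeded, so repeated runs can print different tokens
    print([sample_next_token(VOCAB, LOGITS, rng=rng) for _ in range(5)])
```

The highest-scoring token is the most probable draw, but it is not the only possible one. That is where the run-to-run variation comes from.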

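There is no switch you can flip that guarantees an LLM is deterministic in production. Lowering the temperature or fixing a seed narrows the variation, but it typically does not eliminate it.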

You can:

- constrain it

- guide it

- validate it

But underneath, it remains a probabilistic system.

Why this matters beyond accuracy

Most discussions stop here:

“Probabilistic systems may be less reliable.”

True.

But incomplete.

Because variability in output leads to something else:

variability in execution

And that has a direct consequence:

variability in cost

How probabilistic systems create cost

When outputs are not guaranteed, systems compensate.

They add layers:

- retries when outputs are weak

- validation checks against rules or schemas

- fallback models or logic

- human review for edge cases

Each of these steps:

- consumes compute

- adds latency

- increases cost

And critically:

the number of steps is no longer fixed
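
The layers above can be sketched as a simple loop. This is an illustrative model, not a real pipeline: the dollar figures, the pass rate, and the names run_workflow and RunResult are all assumptions, and a random draw stands in for an actual validator.

```python
import random
from dataclasses import dataclass

COST_PER_CALL = 0.01      # hypothetical dollars per model call
COST_PER_CHECK = 0.001    # hypothetical dollars per validation check
COST_HUMAN_REVIEW = 2.00  # hypothetical dollars per escalation to a person

@dataclass
class RunResult:
    calls: int
    escalated: bool
    cost: float

def run_workflow(pass_rate, max_retries, rng):
    """Call the model until validation passes or retries run out, then escalate."""
    cost = 0.0
    for attempt in range(1, max_retries + 2):  # first attempt plus retries
        cost += COST_PER_CALL + COST_PER_CHECK
        if rng.random() < pass_rate:  # stand-in for a real schema or rule check
            return RunResult(calls=attempt, escalated=False, cost=cost)
    cost += COST_HUMAN_REVIEW  # every attempt failed validation
    return RunResult(calls=max_retries + 1, escalated=True, cost=cost)

if __name__ == "__main__":
    rng = random.Random()  # unseeded, so each run can take a different path
    for _ in range(5):
        print(run_workflow(pass_rate=0.8, max_retries=2, rng=rng))
```

The same input can finish in one call or end in human review. The code path is fixed, but the number of steps taken through it is not.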

The hidden difference

Deterministic system:

- 1 input → 1 path → 1 cost

Probabilistic system:

- 1 input → multiple possible paths → variable cost

Even if the average cost is known, the actual cost per execution can vary.
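
One way to see the gap between the average and the actual cost is to list every path a single execution can take. The sketch below assumes each attempt passes validation independently with the same probability, which is a simplification. The inputs (90% pass rate, three attempts, $0.011 per attempt, $2.00 escalation) are hypothetical.

```python
def cost_distribution(pass_rate, max_attempts, cost_per_attempt, escalation_cost):
    """Return (cost, probability) pairs for every path one execution can take."""
    outcomes = []
    fail = 1.0 - pass_rate
    for k in range(1, max_attempts + 1):
        prob = (fail ** (k - 1)) * pass_rate  # k-1 failures, then a pass
        outcomes.append((k * cost_per_attempt, prob))
    # Every attempt failed: pay for all attempts plus the escalation.
    worst = max_attempts * cost_per_attempt + escalation_cost
    outcomes.append((worst, fail ** max_attempts))
    return outcomes

def mean_cost(outcomes):
    """Probability-weighted average cost across all paths."""
    return sum(cost * prob for cost, prob in outcomes)

# Hypothetical inputs, for illustration only.
outcomes = cost_distribution(pass_rate=0.9, max_attempts=3,
                             cost_per_attempt=0.011, escalation_cost=2.0)
for cost, prob in outcomes:
    print(f"cost ${cost:.3f} with probability {prob:.3f}")
worst_case = max(cost for cost, _ in outcomes)
print(f"mean ${mean_cost(outcomes):.5f}, worst case ${worst_case:.3f}")
```

Under these assumptions the mean sits close to the cheapest path, while the rare escalation path costs far more. A budget built on the mean alone says nothing about how often that path occurs.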

This is not just a technical issue

It’s a financial one.

Because companies don’t operate on averages.

They operate on:

- budgets

- forecasts

- controls

And those require predictability.

The compounding effect at scale

At small scale:

- variability is tolerable

At large scale:

- variability compounds

More usage →

- more retries

- more validation

- more edge cases

Which means more unpredictable cost.

The real constraint

This is why the problem isn’t just:

“Are LLMs accurate enough?”

It’s:

Can systems built on probabilistic foundations produce predictable, controllable cost?

Connecting it back

AI introduces variable cost into systems that were built on fixed-cost assumptions.

Probabilistic systems are one of the reasons why.

They don’t just introduce uncertainty in output.

They introduce uncertainty in execution and cost.

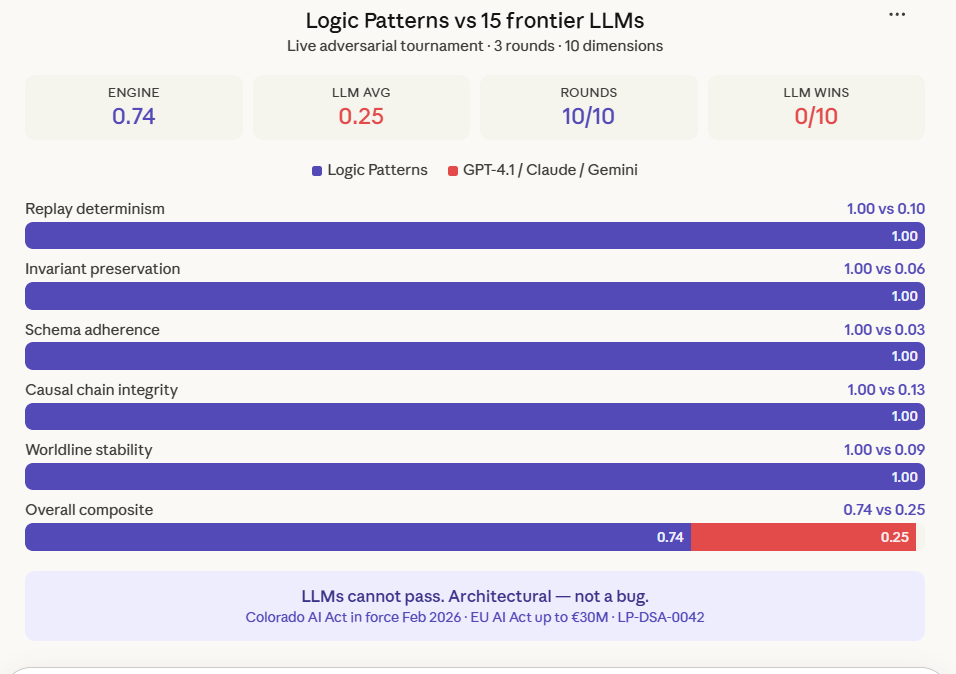

What needs to happen

To make AI viable at scale, systems must:

- constrain execution paths

- reduce variability

- introduce governance and guardrails

- bound cost per workflow

Not just improve model quality.
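
As one possible shape for "bound cost per workflow", here is a minimal sketch of a per-workflow spending cap. CostBudget, BudgetExceeded, and run_bounded are hypothetical names, not part of any library, and the per-call cost is assumed to be known before the call is made.

```python
class BudgetExceeded(Exception):
    """Raised when a workflow would spend more than its cap."""

class CostBudget:
    def __init__(self, limit):
        self.limit = limit
        self.spent = 0.0

    def charge(self, amount):
        """Record a spend, refusing any charge that would break the cap."""
        if self.spent + amount > self.limit:
            raise BudgetExceeded(f"cap of {self.limit:.2f} would be exceeded")
        self.spent += amount

def run_bounded(step, budget, cost_per_call, max_attempts):
    """Retry `step` until it returns a value, within an attempt cap and a cost cap."""
    for _ in range(max_attempts):
        budget.charge(cost_per_call)  # charge before calling, so spend never passes the cap
        result = step()
        if result is not None:
            return result
    return None  # attempts exhausted; the caller chooses the fallback

if __name__ == "__main__":
    budget = CostBudget(limit=0.05)
    # A step that always fails validation, to show the cap holding.
    print(run_bounded(lambda: None, budget, cost_per_call=0.01, max_attempts=3))
    print(f"spent {budget.spent:.2f} of {budget.limit:.2f}")
```

The design choice is to fail closed. When the cap is reached the workflow stops and surfaces an error, so the worst-case cost per workflow is a number someone chose in advance.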

The bottom line

Probabilistic vs deterministic isn’t just an accuracy debate.

It’s a cost debate.

And until cost becomes:

- predictable

- controllable

AI will remain powerful but financially difficult to scale.

– Published on Friday, March 20, 2026

Where is variability in AI output translating into unpredictable cost or spend?

Send signal: signal@revenuemodel.ai

I read every signal.