Lossless memory is required for cost savings, trust, and adoption

Today's LLMs do not remember reliably and do not improve based on governed, verified user input. Instead, the systems built around them lean on workarounds such as Retrieval-Augmented Generation (RAG) to compress, discard, and hallucinate their way around finite memory limits. Those limits are real and unavoidable. They cannot be iterated away.

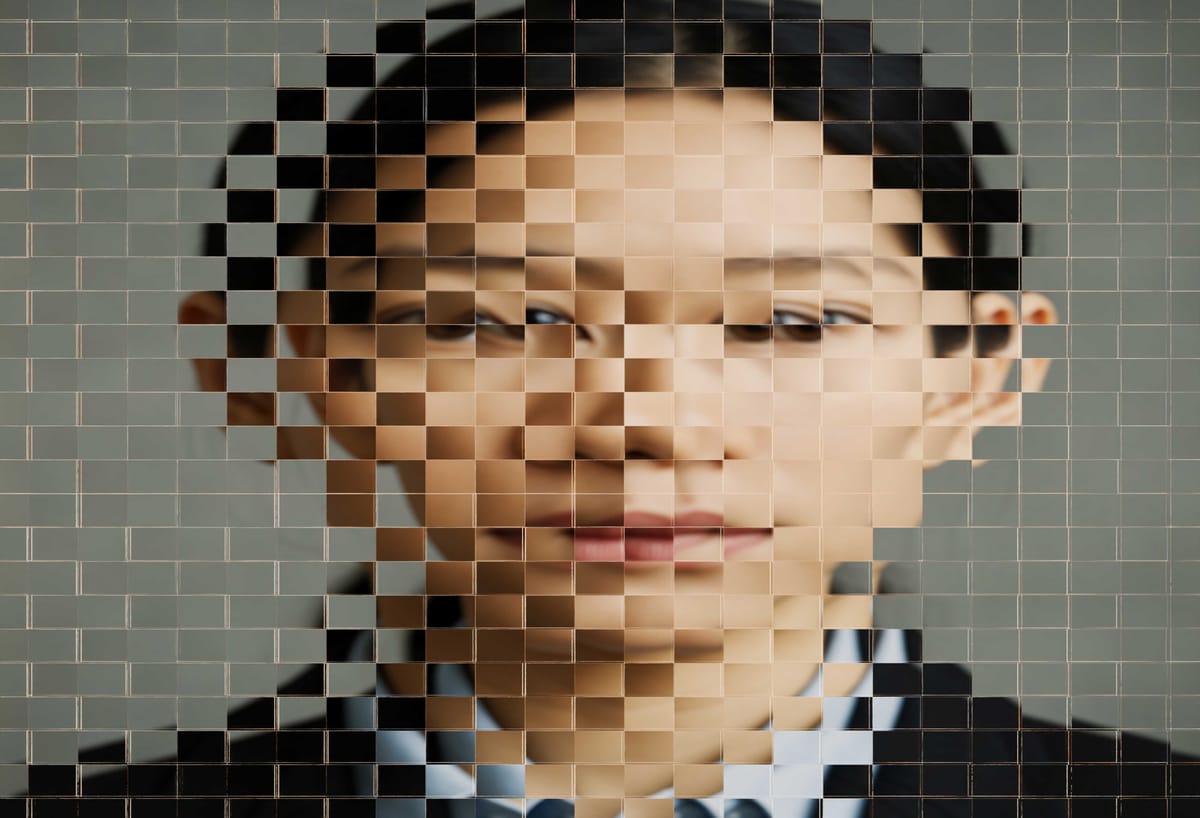

This workaround looks familiar. LLMs survive finite memory the same way JPEGs survived slow networks: through lossy compression. JPEGs throw away fine detail. LLMs throw away facts. And at first glance, the loss is easy to miss.

But look closely at a JPEG and the damage becomes obvious: blurred edges, missing detail, compression artifacts that weren’t visible at first.

Look closely at an LLM’s output and the same pattern emerges. The seams show. The missing facts surface. The confident answer masks an incomplete or distorted underlying record. Once you notice those gaps, you understand what the system has really lost.

What JPEGs lose is detail.

What LLMs lose is truth.

Without reliable memory, AI cannot be trusted. Without trust, AI cannot scale. And without scale, the market caps tied to AI infrastructure evaporate.

Users will not trust an agent that doesn’t remember. When memory is lossy or ephemeral, every interaction effectively resets. Context disappears. Confidence never accumulates. Users are forced to repeat themselves, recheck outputs, and treat the system as disposable rather than dependable.

Memory must become 100% lossless. It is not a feature. It is the prerequisite for iterative learning and for any durable relationship between a user and an AI system.