AI costs can't be predicted or controlled, and that's the problem

By Alan Jacobson, AI Economics Strategist

Most businesses run on a simple premise:

- You approve your costs before you spend the money.

- You budget for them, so you can pay the bill when it arrives.

- And you control your costs before they become more than you can afford.

It's the only way a business can operate like a business.

But AI doesn't work that way

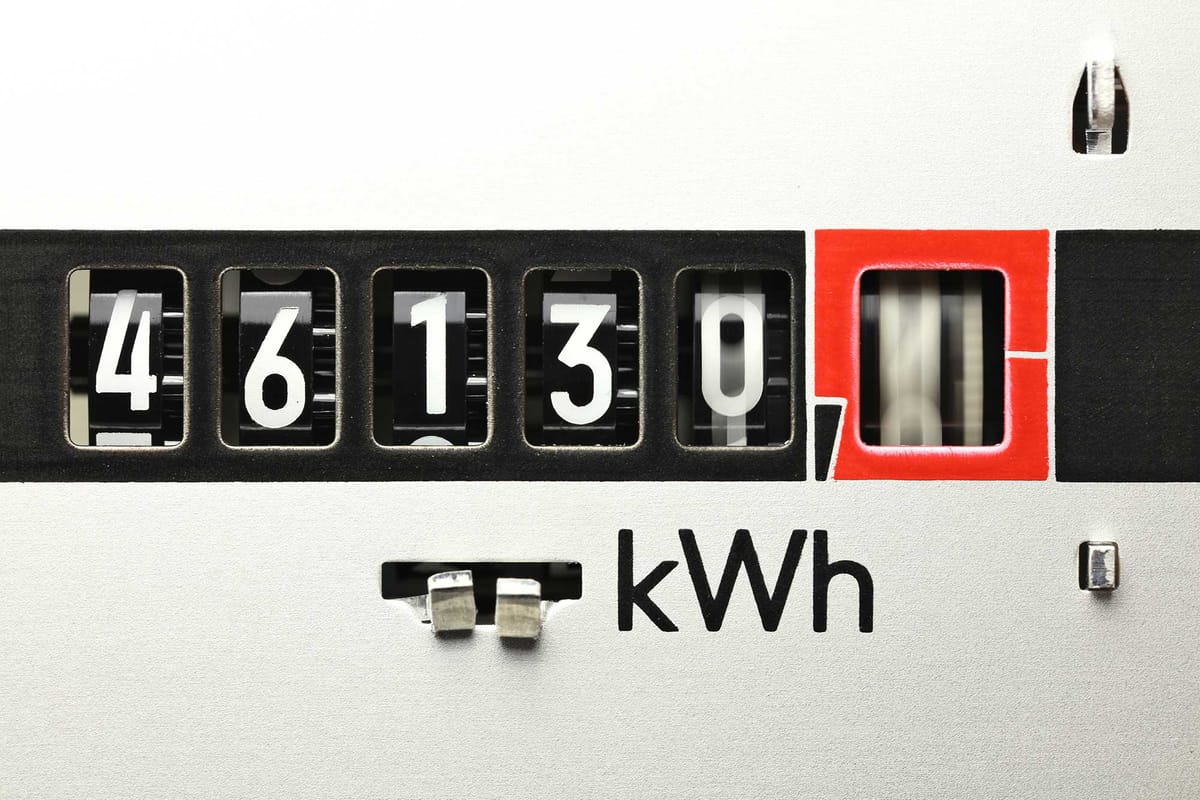

With AI, the cost isn’t known up front, because it isn’t determined until after the compute has been consumed.

So you run the query first.

And you get the bill later.

Which means:

- There’s no way to approve the cost before it’s incurred

- There’s no way to budget for it in advance

- And there’s no way to control it once it’s in motion

And because the cost can’t be predicted, it can’t be managed.
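To make that concrete: with per-token pricing, a request's charge depends on how many output tokens the model generates, and that count is only known once generation finishes. Below is a minimal Python sketch. It assumes hypothetical per-million-token prices rather than any provider's actual rates, and the `request_cost` function and token counts are illustrative only.

```python
# A minimal sketch, assuming hypothetical per-token prices (not real provider rates).
PRICE_PER_M_INPUT = 3.00    # hypothetical USD per million input tokens
PRICE_PER_M_OUTPUT = 15.00  # hypothetical USD per million output tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request; computable only after output_tokens is known."""
    return (input_tokens * PRICE_PER_M_INPUT
            + output_tokens * PRICE_PER_M_OUTPUT) / 1_000_000


# The same 2,000-token prompt can produce very different bills,
# because the model decides the output length at run time.
prompt_tokens = 2_000
for output_tokens in (200, 2_000, 20_000):
    cost = request_cost(prompt_tokens, output_tokens)
    print(f"{output_tokens:>6} output tokens -> ${cost:.4f}")
```

The prompt is fixed before the call, but the bill is not. Under these assumed prices, the same prompt costs 34 times as much when the model writes 20,000 tokens instead of 200.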

If you’re enjoying flat-rate pricing now, enjoy it while it lasts

Today, many enterprises still experience AI as a fixed cost.

They pay per seat and buy subscriptions.

From their perspective, cost looks stable.

But that stability is artificial.

Underneath, providers incur real-time, usage-based compute cost on every action and absorb that expense in the short term, subsidizing usage in hopes of boosting adoption.

The pricing model hasn’t caught up to the cost model.

That gap is being absorbed — for now — by the provider.

It shows up as:

- lower gross margins

- higher cost of revenue

- increasing pressure to introduce usage-based pricing
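A rough sketch shows why a flat seat price squeezes the provider. Revenue per seat is fixed, but compute cost per seat scales with how heavily that seat is used. The figures below (a $30 monthly seat, $0.01 of compute per request) are hypothetical assumptions for illustration, not any vendor's numbers. Margin here counts only compute as cost of revenue.

```python
# A minimal sketch; seat price and per-request compute cost are hypothetical.
SEAT_PRICE = 30.00       # hypothetical flat monthly price per seat, USD
COST_PER_REQUEST = 0.01  # hypothetical compute cost per request, USD


def seat_gross_margin(requests_per_month: int) -> float:
    """Gross margin on one flat-priced seat, counting only compute as cost."""
    compute_cost = requests_per_month * COST_PER_REQUEST
    return (SEAT_PRICE - compute_cost) / SEAT_PRICE


for requests in (300, 1_500, 3_000, 4_500):
    print(f"{requests:>5} requests/month -> margin {seat_gross_margin(requests):.0%}")
```

Under these assumptions, the seat breaks even on compute at 3,000 requests a month. Every request beyond that is served at a loss, and that is the gap the provider is absorbing for now.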

This is not a permanent equilibrium.

Over time, pricing will move to match cost.

When it does, today’s fixed cost will become a variable expense.

Scale does not solve the problem

Most technology leaders assume scale will fix AI economics.

That assumption comes from how software has always worked – SaaS, search, social media and digital marketplaces all share the same economic model:

- High fixed cost upfront

- Near-zero marginal cost

- Margin expansion with scale

Once the product is built, each additional user costs almost nothing to serve, so…

- Revenue grows faster than cost.

- Margins expand.

But AI does not follow that model

Inference introduces a variable cost that persists at the unit level.

Every query, every generation, every agent action incurs a compute cost that cannot be predicted in advance.

That cost does not disappear with scale.

It grows with every unit of usage.

So scale does not improve unit economics.

It amplifies them.
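The contrast fits in a simple margin formula. Let F be the fixed cost, p the price per unit, c the variable cost per unit, and n the number of units served. Then margin(n) = 1 - c/p - F/(p*n). Scale shrinks only the F/(p*n) term, so margin approaches a ceiling of 1 - c/p. That ceiling is close to 100% when c is near zero, as in classic SaaS, and far below it when c is a meaningful share of price, as with inference. All numbers below are hypothetical.

```python
# A minimal sketch with hypothetical numbers: fixed cost F, price p, variable cost c.
def margin(units: int, price: float, variable_cost: float, fixed_cost: float) -> float:
    """margin(n) = 1 - c/p - F/(p*n)."""
    revenue = price * units
    total_cost = fixed_cost + variable_cost * units
    return (revenue - total_cost) / revenue


FIXED = 1_000_000  # hypothetical fixed (build) cost, USD
PRICE = 10.0       # hypothetical price per unit, USD

for units in (100_000, 1_000_000, 10_000_000):
    saas = margin(units, PRICE, variable_cost=0.10, fixed_cost=FIXED)  # near-zero marginal cost
    ai = margin(units, PRICE, variable_cost=4.00, fixed_cost=FIXED)    # per-unit inference cost
    print(f"{units:>10,} units  SaaS {saas:.1%}  AI {ai:.1%}")
```

In both cases scale spreads the fixed cost. Only the SaaS line heads toward 100%, though. The AI line can never pass 1 - c/p = 60%, because every unit still carries its $4 of compute.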

This is the inversion most teams miss.

In traditional software, scale separates revenue from cost.

In AI, scale tightly couples them.

- You can optimize infrastructure.

- You can reduce cost per token.

- You can improve model efficiency.

But none of those eliminate the fundamental dynamic: A variable cost accrues on every action.
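A quick illustration shows why efficiency gains alone don't break the coupling. Total inference cost is tokens consumed times cost per token, so usage growth can outrun a lower unit cost. The numbers are hypothetical: cost per token falls by half while token volume triples.

```python
# Hypothetical figures: unit cost halves while usage triples.
def total_inference_cost(tokens: int, cost_per_million: float) -> float:
    """The variable cost accrues on every token processed."""
    return tokens / 1_000_000 * cost_per_million


before = total_inference_cost(tokens=500_000_000, cost_per_million=2.00)
after = total_inference_cost(tokens=1_500_000_000, cost_per_million=1.00)
print(f"before: ${before:,.0f}  after: ${after:,.0f}")
```

The per-token cost improved by 50%, yet the bill rose by 50%, because the variable cost is still charged on every action.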

That means the core SaaS assumption — that margins expand as usage grows — no longer holds.

Instead, usage drives cost of revenue in real time.

If pricing does not perfectly track that cost, margins compress.

If usage accelerates faster than pricing adjusts, compression accelerates.
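The lag between usage and pricing can be simulated in a few lines. The sketch assumes, hypothetically, that a customer pays a fixed fee that is repriced only once a quarter to restore a target margin, while the customer's usage grows every month. The growth rates, cost per unit, and target margin are all invented for illustration.

```python
# Hypothetical simulation: usage grows every month, but price resets only once a quarter.
COST_PER_UNIT = 0.02  # hypothetical compute cost per unit of usage, USD
TARGET_MARGIN = 0.60  # hypothetical margin the provider reprices back to


def simulate(months: int, monthly_growth: float, start_usage: float = 1_000.0) -> list[float]:
    """Return the monthly margin for one customer on a quarterly-repriced fee."""
    usage = start_usage
    # Initial fee set so the margin equals TARGET_MARGIN at starting usage.
    price = usage * COST_PER_UNIT / (1 - TARGET_MARGIN)
    margins = []
    for month in range(months):
        if month > 0 and month % 3 == 0:
            # Quarterly repricing catches the fee up to current usage.
            price = usage * COST_PER_UNIT / (1 - TARGET_MARGIN)
        cost = usage * COST_PER_UNIT
        margins.append((price - cost) / price)
        usage *= 1 + monthly_growth  # usage keeps growing between repricings
    return margins


for growth in (0.10, 0.20):
    path = ", ".join(f"{m:.0%}" for m in simulate(6, growth))
    print(f"{growth:.0%} monthly growth: {path}")
```

Margin slides between repricings and recovers at each quarter. Doubling the growth rate deepens the slide by the third month, from about 52% to about 42%.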

This is already visible in twenty-five 10-Q filings

Companies like Cloudflare, C3.ai and GitHub are seeing margin compression of over 1,000 basis points after deploying AI.

Not because demand is weak.

Because the cost is incurred before it can be predicted, approved, or controlled – and scale doesn’t solve the problem.
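For readers less used to filing language: a basis point is one hundredth of a percentage point, so 1,000 basis points equals 10 percentage points of gross margin. The helper below converts a before-and-after gross margin into basis points. The example margins are hypothetical and are not taken from any company's filings.

```python
def margin_change_bps(margin_before_pct: float, margin_after_pct: float) -> float:
    """Change in margin, in basis points (1 bp = 0.01 percentage points)."""
    return (margin_after_pct - margin_before_pct) * 100


# Hypothetical example: gross margin falls from 78% to 67%.
print(f"{margin_change_bps(78.0, 67.0):,.0f} bps")
```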

Bottom line?

The problem is not the large language models.

The problem is the business model.

Where do you see this breaking first — pricing, usage, or margins?

Send signal: signal@revenuemodel.ai

I read every signal.

– Published on Friday, March 20, 2026