Agentic AI may render tokens obsolete as a unit of measure

By Alan Jacobson, AI Economics Strategist

This week, stories in The New York Times and The Wall Street Journal highlighted something that’s been quietly building inside companies: employees are deploying AI agents that generate massive volumes of tokens—and massive, unexpected costs.

The assumption behind most AI systems is simple: tokens approximate usage, and usage approximates cost.

That assumption is now breaking, because…

Tokens measure text.

Agents don’t generate a commensurate amount of text for the work they perform.

So agents use compute that isn’t reflected in the tokens they generate.

If tokens don’t track compute, pricing can’t track cost—and profit margins compress.

| Workflow A: Simple prompt/response | Workflow B: Agentic workflow |
|---|---|
| 1. User prompt: “Summarize this document”. Tokens generated: 25. Compute: single inference pass. | 1. User prompt: “Summarize this document”. Tokens generated: 25. Compute: initial inference. |
| 2. Model produces summary. Tokens generated: 200. Compute: single inference pass. | 2. Agent retrieves 5 documents for context. Tokens generated: 0. Compute: embedding + vector search + ranking. |
| | 3. Agent evaluates relevance, discards 3 docs, keeps 2. Tokens generated: 0. Compute: multiple inference passes. |
| | 4. Agent attempts first summary draft, determines it is insufficient. Tokens generated: 0. Compute: inference pass. |
| | 5. Agent retries with a different prompt strategy. Tokens generated: 0. Compute: inference pass. |
| | 6. Agent calls external metadata tool. Tokens generated: 0. Compute: API call + processing. |
| | 7. Agent produces final summary. Tokens generated: 200. Compute: final inference pass. |
| Total tokens: 225. Actual work: 1 pass. | Total tokens: 225. Actual work: 5-7 inference passes + retrieval + tool use. |

| What billing sees vs. what actually happened | Workflow A | Workflow B |
|---|---|---|
| Tokens billed | 225 | 225 |
| Inference passes | 1 | 5-7 |
| Retrieval ops | 0 | multiple |
| Tool/API calls | 0 | 1+ |
| Actual compute | Low | Significantly higher |

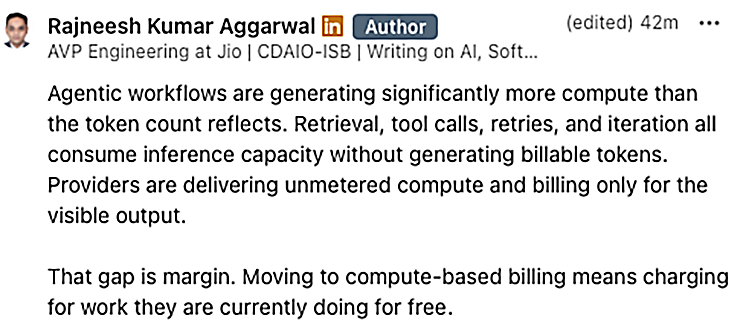
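The comparison above can be tallied in a few lines of Python. This is only an illustrative sketch: the `Step` record and `summarize` helper are hypothetical names, the step values are copied from the two workflows, and where the comparison says only "multiple" inference passes the code assumes 2.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step of a workflow (hypothetical record, for illustration only)."""
    name: str
    tokens: int = 0            # tokens the step contributes to the bill
    inference_passes: int = 0  # model passes the step actually runs
    retrieval_ops: int = 0     # embedding, vector search, ranking, ...
    tool_calls: int = 0        # external tool or API calls


WORKFLOW_A = [
    Step("user prompt", tokens=25),
    Step("model produces summary", tokens=200, inference_passes=1),
]

WORKFLOW_B = [
    Step("user prompt", tokens=25, inference_passes=1),
    Step("retrieve 5 documents", retrieval_ops=3),   # embedding + search + ranking
    Step("evaluate relevance", inference_passes=2),  # "multiple"; 2 is an assumption
    Step("first draft, rejected", inference_passes=1),
    Step("retry with new prompt strategy", inference_passes=1),
    Step("external metadata tool", tool_calls=1),
    Step("final summary", tokens=200, inference_passes=1),
]


def summarize(steps):
    """Total what billing sees (tokens) next to what actually ran."""
    return {
        "tokens_billed": sum(s.tokens for s in steps),
        "inference_passes": sum(s.inference_passes for s in steps),
        "retrieval_ops": sum(s.retrieval_ops for s in steps),
        "tool_calls": sum(s.tool_calls for s in steps),
    }


if __name__ == "__main__":
    print("A:", summarize(WORKFLOW_A))
    print("B:", summarize(WORKFLOW_B))
```

Under these assumptions both workflows total 225 billed tokens, while the work columns diverge sharply. That is the gap a token-only meter cannot see.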

If you are counting tokens, you are flying blind for two reasons:

- Semantic blindness: Tokens count pieces of text, but they don’t capture meaning. A simple request and a complex task may use the same number of tokens while requiring very different amounts of compute.

- Invisibility: Agentic workflows perform work off-screen — retrieval, tool use, iteration — that never becomes tokens at all.

So tokens measure neither the meaning of the work nor the full amount of work performed.

You can count tokens, but you can’t count on them to measure, provision, bill or control compute.

To put it in historical context, using tokens to measure compute is like measuring electricity in horsepower: the unit stopped making sense once machines replaced horses.

Tokens are the horsepower of AI.

Compute is the kilowatt-hour.

In early AI systems (simple prompt and response), that approximation mostly held. One request triggered one model pass. Tokens were an inaccurate, albeit workable, proxy for compute.

Agentic AI changes that.

A single request now triggers multiple steps:

- planning

- retrieval

- tool use

- validation

- retries

- sub-agents running in parallel

Each step requires compute. Each step reprocesses context. Each step adds work.

But not all of that work shows up proportionally in token counts.

The result:

Compute grows with execution depth.

Tokens do not.

This is the disconnect now surfacing in real-world deployments.
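A toy model makes the divergence concrete. In this sketch every step re-reads the full context and only the last step emits the visible answer. The function name `compute_vs_output` and the 2,000-token context are illustrative assumptions, not figures from any real deployment. The 200-token output matches the summary length used earlier.

```python
def compute_vs_output(context_tokens: int, output_tokens: int, depth: int) -> dict:
    """Toy model: each of `depth` steps reprocesses the whole context,
    but only the final step produces the visible output."""
    return {
        "depth": depth,
        "tokens_processed": context_tokens * depth,  # grows with execution depth
        "tokens_in_final_output": output_tokens,     # stays flat
    }


if __name__ == "__main__":
    # Assumed values: 2,000-token context, 200-token final answer.
    for depth in (1, 3, 7):
        print(compute_vs_output(2_000, 200, depth))
```

At depth 7 the model has processed seven times the context of the single-pass case, yet the final output is the same 200 tokens.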

Tokens have never been an accurate proxy for compute. They were merely easy to count.

But in a world of compound execution, the gap becomes impossible to ignore.

A system can:

- reprocess the same context multiple times

- execute chains of model calls

- spawn parallel tasks

- run validation and retry loops

All of which consume compute—without a clean, proportional increase in tokens.

So while organizations can:

- count tokens

- monitor usage

- even reduce spend

They still cannot measure or control the underlying compute driving cost.

And if cost cannot be measured correctly, it cannot be priced or controlled.

That’s why this is surfacing now.

Not because AI suddenly became expensive—but because agentic AI multiplies compute in ways tokens were never designed to capture.

The industry is still measuring output.

The cost is coming from execution.

Tokens show just the tip of the iceberg.

– Published on Sunday, March 22, 2026